Multi-modal monitoring systems

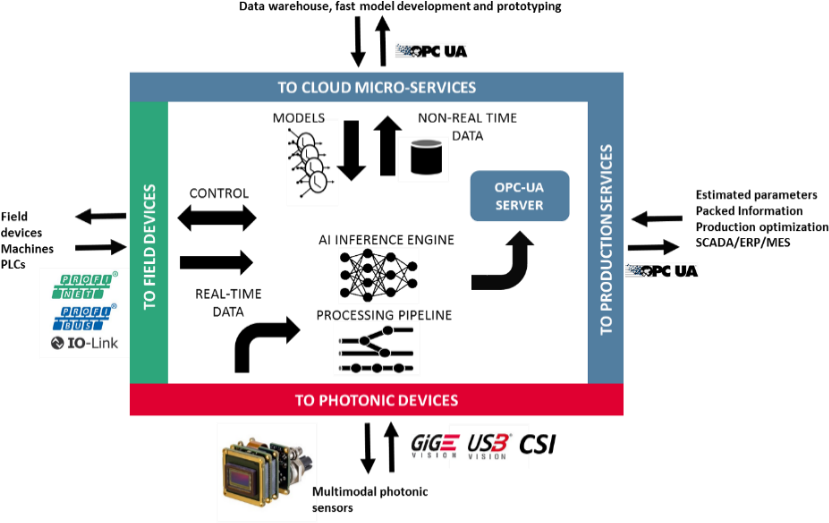

To manage the large volume of data from the spectral sensors and make the most of their potential, MULTIPLE will develop smart, IoT-native photonic monitoring devices. These edge devices will implement novel real-time processing pipelines based on deep learning, enabling the processing of high-dimensional data such as hyperspectral datacubes for different operations in the industrial manufacturing environment, with minimal engineering intervention.
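As a rough illustration of the data volumes involved, the sketch below estimates the raw size of a single hyperspectral datacube and the resulting stream rate. The cube dimensions, the 16-bit sample depth, and the acquisition rate are assumptions chosen for illustration only; they are not specifications of the MULTIPLE sensors.

```python
def datacube_bytes(rows, cols, bands, bytes_per_sample=2):
    """Raw size in bytes of one datacube holding `bands` samples per pixel."""
    return rows * cols * bands * bytes_per_sample


def stream_rate_mib_per_s(cube_bytes, cubes_per_second):
    """Uncompressed data rate in MiB/s for a given acquisition rate."""
    return cube_bytes * cubes_per_second / 2**20


# Assumed example values: 512 x 512 pixels, 200 bands, 16-bit samples, 10 cubes/s.
cube = datacube_bytes(rows=512, cols=512, bands=200)
rate = stream_rate_mib_per_s(cube, cubes_per_second=10)
print(f"{cube / 2**20:.0f} MiB per cube, {rate:.0f} MiB/s uncompressed")
```

Even with these modest assumed figures, the uncompressed stream is too large to forward to the cloud as it is, which is the motivation for processing the data on the edge device.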

Each monitoring system will be equipped with an AI-capable edge computing device based on the latest system-on-chip (SoC) boards (e.g. ARM/GPU SoCs), providing functionality for data collection, pre-processing, AI inference, control, and ubiquitous communications.
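The chain of functions listed above (data collection, pre-processing, AI inference, control) can be pictured as a simple pipeline. The following is a minimal sketch under stated assumptions: the class `EdgePipeline` and all stage functions are hypothetical names introduced here, the acquisition stage returns a fixed spectrum instead of reading a sensor, and the inference stage is a placeholder where a real device would run a trained deep model.

```python
from dataclasses import dataclass
from typing import Callable, List

Spectrum = List[float]


@dataclass
class EdgePipeline:
    """Hypothetical container chaining the four on-device stages."""
    acquire: Callable[[], Spectrum]             # data collection
    preprocess: Callable[[Spectrum], Spectrum]  # pre-processing
    infer: Callable[[Spectrum], float]          # AI inference (placeholder)
    control: Callable[[float], str]             # control decision

    def step(self) -> str:
        """Run one acquisition-to-decision cycle and return the decision."""
        raw = self.acquire()
        clean = self.preprocess(raw)
        score = self.infer(clean)
        return self.control(score)


def normalise(spectrum):
    """Scale a spectrum to unit maximum (stand-in for real pre-processing)."""
    peak = max(spectrum)
    return [v / peak for v in spectrum] if peak > 0 else list(spectrum)


def mean_intensity(spectrum):
    """Placeholder for model inference: returns the mean band intensity."""
    return sum(spectrum) / len(spectrum)


def threshold_control(score, limit=0.5):
    """Return 'adjust' when the score falls below the limit, else 'hold'."""
    return "adjust" if score < limit else "hold"


pipeline = EdgePipeline(
    acquire=lambda: [2.0, 4.0, 8.0, 4.0],  # fixed sample instead of a sensor read
    preprocess=normalise,
    infer=mean_intensity,
    control=threshold_control,
)
decision = pipeline.step()
```

Keeping each stage behind a plain callable means that a stage can be swapped, for instance replacing the placeholder with a trained model, without touching the rest of the loop.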

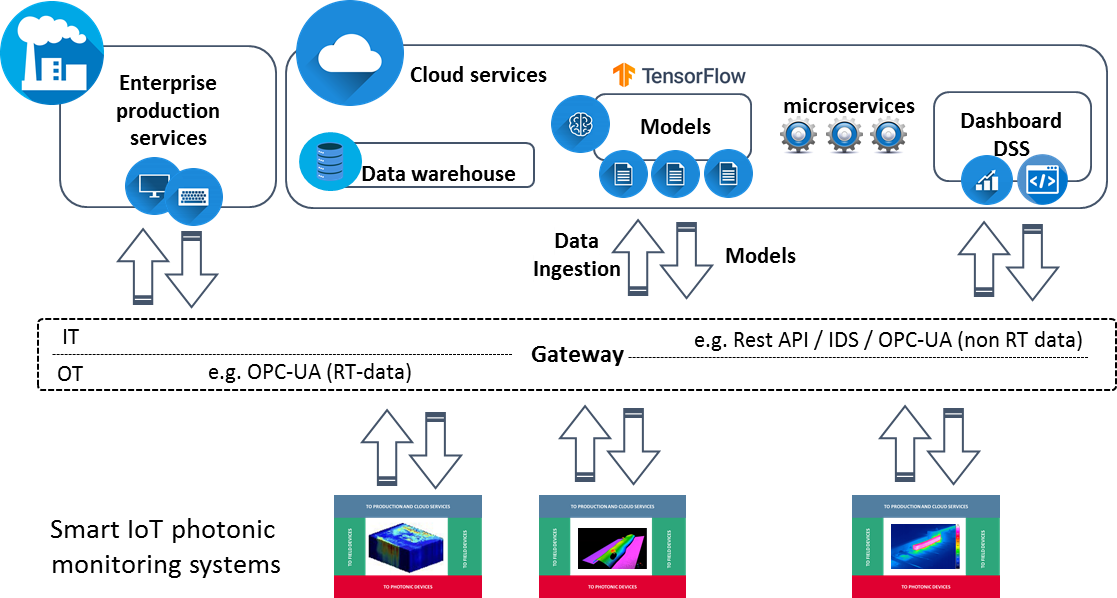

Communication with other monitoring solutions, field devices, or production management applications and services will be provided through standards defined in Industry 4.0 (RAMI 4.0 model²). For example, the adoption of OPC-UA will allow machine-readable, semantically unambiguous self-description of properties and capabilities, together with secure machine-to-machine communication and data exchange.

The embedded system will use the extracted information to make decisions, e.g. acting on the field devices in closed-loop control, or to feed the production management services with only a meaningful and relevant stream of features. The core of the processing pipeline will rely on an AI inference engine that allows high-performance deep neural models to be deployed seamlessly through cloud services. This approach will make the monitoring system easier to reconfigure and reduce the engineering effort required by a new inspection problem: training and deploying a new deep model replaces custom algorithm reprogramming and the major challenge of manual feature extraction.
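The two uses of the extracted information described above can be sketched as follows. This is an illustrative simplification, not the project's control design: the proportional-only correction, the deadband filter, and the names `proportional_correction` and `significant_changes` are all assumptions introduced for this example.

```python
def proportional_correction(measured, setpoint, gain=0.5):
    """Closed-loop use: actuator correction for one control cycle.

    Proportional-only control is assumed here for simplicity.
    """
    return gain * (setpoint - measured)


def significant_changes(values, deadband):
    """Reporting use: yield only values that differ from the last
    reported value by more than `deadband`, so that downstream
    services receive a reduced stream of relevant features.
    """
    last = None
    for value in values:
        if last is None or abs(value - last) > deadband:
            last = value
            yield value


# Hypothetical feature values extracted by the edge device over time.
features = [1.0, 1.05, 1.3, 1.31, 0.9]
reported = list(significant_changes(features, deadband=0.2))
correction = proportional_correction(measured=8.0, setpoint=10.0)
```

In this sketch only three of the five feature values are forwarded, which shows how the edge device can keep the stream sent to production management services small.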

Going beyond current machine vision and spectral monitoring solutions, which focus on monitoring a specific operation at a specific point, AI models will be trained to recognise anomalies in data and to continuously improve their performance. Moreover, the increased data generation capability (e.g. of hyperspectral imaging sensors) and the use of deep learning require mechanisms for scalable data management (i.e. data ingestion, analysis, and storage) and demand flexible cloud architectures for the fast development and retraining of models.
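To make the idea of recognising anomalies in spectral data concrete, the sketch below flags a spectrum whose bands deviate strongly from a baseline learned from reference spectra. It deliberately uses a simple per-band z-score in place of a deep model, so it only illustrates the input and output of such a detector; the function names, the example spectra, and the threshold of 3.0 are assumptions.

```python
from statistics import mean, pstdev


def fit_baseline(reference_spectra):
    """Compute the per-band mean and standard deviation of normal spectra."""
    bands = list(zip(*reference_spectra))
    return [mean(b) for b in bands], [pstdev(b) for b in bands]


def anomaly_score(spectrum, baseline, eps=1e-9):
    """Largest absolute per-band z-score of a spectrum against the baseline."""
    means, stds = baseline
    return max(abs(x - m) / (s + eps) for x, m, s in zip(spectrum, means, stds))


def is_anomalous(spectrum, baseline, threshold=3.0):
    """Flag a spectrum whose score exceeds the (assumed) threshold."""
    return anomaly_score(spectrum, baseline) > threshold


# Hypothetical three-band reference spectra recorded under normal operation.
reference = [
    [1.0, 2.0, 3.0],
    [1.2, 2.2, 3.2],
    [0.8, 1.8, 2.8],
]
baseline = fit_baseline(reference)
normal_flag = is_anomalous([1.0, 2.0, 3.0], baseline)
defect_flag = is_anomalous([1.0, 2.0, 5.0], baseline)
```

Refitting the baseline as new reference spectra arrive is the simplest analogue of the retraining described above; a trained deep model would take the place of `anomaly_score` while keeping the same interface.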

Based on a micro-services architecture in the cloud and on existing open source platforms, MULTIPLE pursues the following goals:

- Providing a scalable data warehouse to ingest, store, and manage (i.e. search and analyse) heterogeneous photonic multimodal data and other production data, with the capability to detect anomalies in real time.

- Enabling the fast development of deep models and inter-related models that combine data from different monitoring devices with production floor observations (i.e. annotations).

- Providing the infrastructure to seamlessly deploy such deep learning models on the edge computing devices in an industrial environment, for granular monitoring and control of production.

MULTIPLE will develop smart edge computing devices with embedded AI processing capabilities that interface with the photonic sensors, with other existing sensors and actuators through industrial fieldbuses, and with the cloud. These embedded devices will process the spectral data in real time to extract relevant parameters using machine learning and AI models, and will provide a meaningful flow of information for production optimisation and management. Cloud micro-services and a scalable data warehouse will be implemented to support the fast development, retraining, and deployment of AI models.

- To develop smart edge computing devices based on state-of-the-art SoC boards capable of deploying AI models.

- To develop a scalable data warehouse for data streaming, ingestion, and management.

- To implement real-time embedded algorithms for multispectral measurements.

- To implement a real-time inference engine to deploy deep learning models.

- To develop the cloud platform for supporting fast prototyping, retraining and deployment of deep learning models.